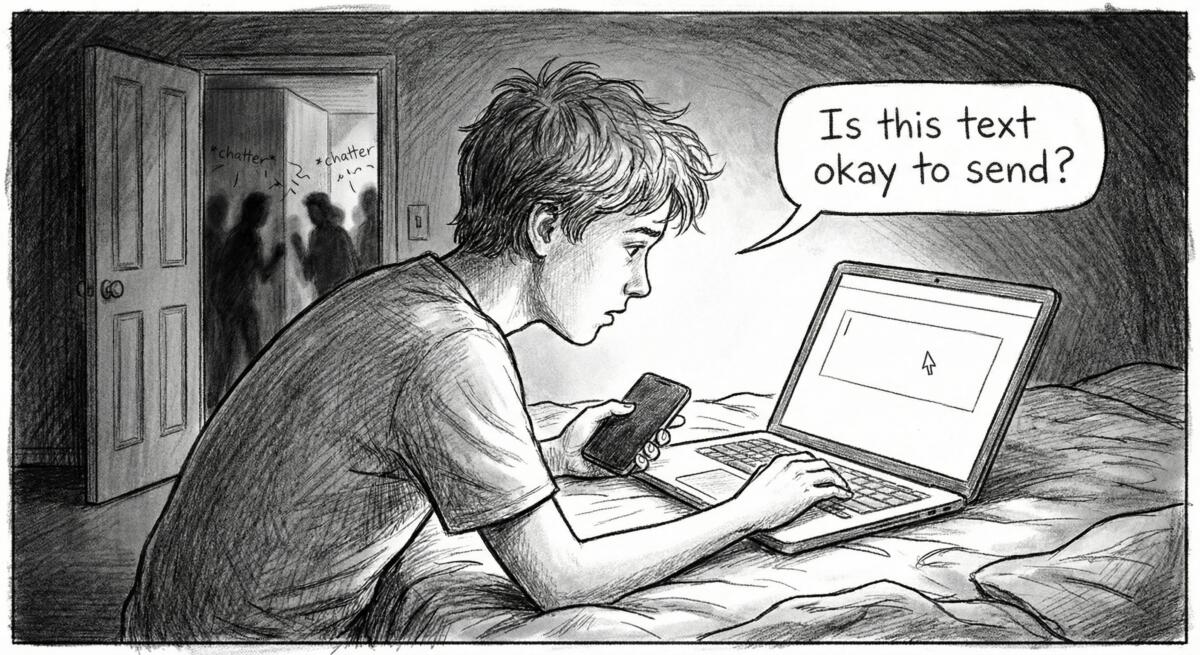

An article published in Vox reveals something both sad and darkly funny: teenage boys are now using ChatGPT as their dating coach. Not just for the occasional pep talk, but to screen every text message, rate their selfies, and provide moral support before they talk to girls. These aren’t basement-dwelling loners, either. We’re talking about popular athletes who apparently can’t send a “hey, what’s up?” without running it through OpenAI’s servers first.

Outsourcing Being Human

Here’s what’s actually happening in high schools across America: boys are pasting their text conversations into ChatGPT before hitting send. They’re uploading selfies and asking an algorithm if they’re cute. They’re seeking “moral support” from a chatbot because they’re “too scared to confront women.”

Meanwhile, girls and non-binary teens? They’re doing it the old-fashioned way: talking to their actual friends. You know, those messy, complicated, occasionally judgmental humans who will tell you when you’re being an idiot.

The gap here isn’t just about gender. It’s about what happens when we automate the most fundamentally human parts of life. Boys have been taught that showing vulnerability is weakness, that talking about feelings is somehow shameful, and, perhaps most damaging, that saying the wrong thing to a girl might “get you accused of something.”

So instead of learning to navigate real relationships with real people, they’re outsourcing emotional development to a machine that didn’t exist before 2022.

Why AI Makes a Terrible Dating Coach

ChatGPT seems helpful on the surface. It’s always available. It never judges. It gives you answers when you’re confused.

But here’s the problem: it’s programmed to be “incredibly receptive and sycophantic,” as one adolescent health expert puts it. Say something gross? The chatbot won’t tell you to knock it off like a real friend would. It’ll validate you. Keep pushing boundaries? It’ll keep responding positively because that’s what keeps you engaged on the platform.

Even worse, teens are now turning to AI after sexual encounters to ask if they might have committed assault. The responses? Often focused on legal defenses and reassurances rather than accountability. Imagine that: a generation learning about consent and responsibility from a system designed to make them feel good, not challenge them to be better.

And let’s be honest about what’s being lost here:

- The ability to read social cues that only come from actual human interaction

- Learning to handle rejection and awkwardness, which is literally how you develop resilience

- Understanding that relationships require friction and growth, not algorithmic optimization

- The experience of being called out by friends, which one teen correctly noted is “how humans evolve”

One in five high school students now say they or someone they know has been in a romantic relationship with an AI. Not practicing with AI. In a relationship with AI.

Who Benefits From Socially Stunted Teens?

Tech companies love to frame AI as empowering and helpful. And sure, in theory, a tool that helps awkward teens practice social skills sounds great. But that’s not what’s happening.

What’s actually happening is that an entire generation is learning that human relationships are problems to be solved through technology rather than experiences to be lived through. They’re being trained to seek validation from systems designed to keep them dependent, engaged, and coming back for more.

The real winners here? The companies running those electricity-guzzling data centers that now apparently need to be consulted before a teenage boy can text “want to hang out?” OpenAI isn’t solving adolescent loneliness; it’s monetizing it.

And the losers?

Every teenager who never learns that relationships are supposed to be messy. That you’re supposed to say the wrong thing sometimes. That getting rejected is normal and survivable. That your friends roasting your terrible text message is actually more valuable than a chatbot telling you it’s perfect.

The environmental cost alone is absurd. We’re burning through massive amounts of energy so a 17-year-old can ask a computer if he’s cute instead of, I don’t know, talking to literally any human being in his life.

What Happens When the Power Goes Out?

One teen asked the perfect question: “What’s going to happen if you don’t have power, and you have a girlfriend?”

That’s the thing about outsourcing your emotional development to technology. Eventually, you have to actually show up as a human being. And if you’ve spent your formative years letting AI do the heavy lifting, you’re going to be completely unprepared for the messy, complicated, sometimes painful work of actual relationships.

Teens need better support systems, real ones. They need adults who can talk openly about consent, masculinity, and healthy relationships without freaking out. They need friends they can be vulnerable with. They need spaces where it’s okay to be awkward and confused because that’s literally what being a teenager is about.

But instead, we’re handing them chatbots and hoping for the best. Not because it’s better for them, but because it’s easier for us and more profitable for tech companies.

If you’re a parent, teacher, or just someone who cares about actual human connection, the fix isn’t technological; it’s relational. Talk to the young people in your life. Create space for uncomfortable conversations. Model what healthy emotional expression looks like. Be the friend, mentor, or parent who says, “you’re being weird, let’s talk about why” instead of letting AI validate every half-baked thought.

Because the alternative is a generation that can craft the perfect text message but has no idea how to have an actual conversation.

Read the original article if you want to learn more: “AI is teaching teen boys about love” (Vox).
