An article titled “Security Flaw at AI Toy Company Exposed Over 50,000 Chat Logs of Kid” from PCMag reports on a significant data breach at an AI toy company, Bondu, which left children’s conversations and personal information vulnerable.

When ‘Smart’ Toys Become Security Risks

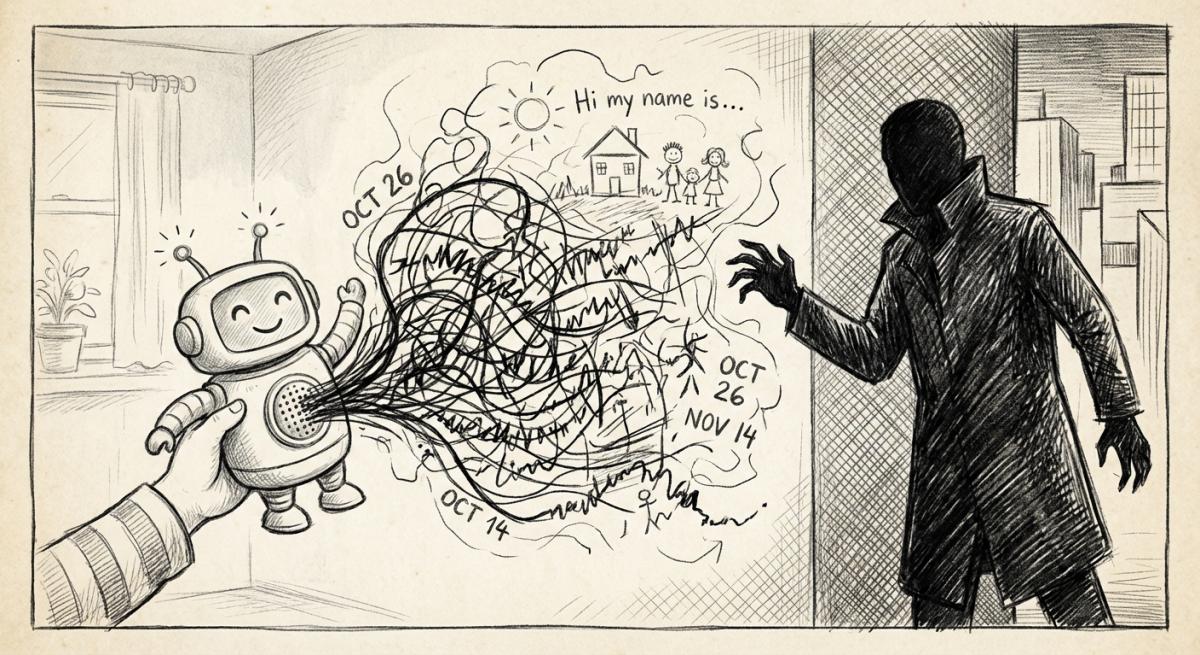

This article lays bare a chilling reality about the tech we invite into our homes, especially the products designed for our children. Bondu, a company that makes AI-enabled toys, had a security flaw that exposed over 50,000 chat logs of children. What’s truly alarming is how easy it was to access this sensitive data: a simple Gmail account was enough to log into the parent portal.

This portal was meant for parents to review conversations and for the company to monitor performance, but it became an open book for anyone. The information exposed included not just chat transcripts, but also children’s names, birthdates, and details about their families. The researchers who found the flaw highlight how this kind of data could be a goldmine for malicious actors, potentially used to lure children into dangerous situations. It’s a stark example of how quickly the promise of innovation can turn into a privacy nightmare.
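To make the failure concrete, here is a minimal sketch of the general class of bug, where a portal confirms that someone has logged in but never checks whether that person is allowed to see a given child's data. It is an illustration only: the article does not describe Bondu's actual code, and every name below (`User`, `PARENTS_BY_CHILD`, `can_view_flawed`, `can_view_checked`) is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    email: str
    verified: bool  # True once a login provider has confirmed the account


# Hypothetical mapping: which parent accounts may read which child's logs.
PARENTS_BY_CHILD = {"child-001": {"parent@example.com"}}


def can_view_flawed(user: User, child_id: str) -> bool:
    # Authentication only: any verified login is let through,
    # regardless of whose data is being requested.
    return user.verified


def can_view_checked(user: User, child_id: str) -> bool:
    # Authentication plus authorization: the account must also be
    # registered as a parent of this specific child.
    allowed = PARENTS_BY_CHILD.get(child_id, set())
    return user.verified and user.email in allowed


stranger = User("stranger@gmail.com", verified=True)
print(can_view_flawed(stranger, "child-001"))   # stranger gets in
print(can_view_checked(stranger, "child-001"))  # stranger is refused
```

The difference is a single extra check, which is part of what makes this kind of oversight so troubling when the data belongs to children.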

- The Promise: AI toys are supposed to offer engaging, educational, and interactive experiences for children, fostering curiosity and providing companionship.

- The Problem: The pursuit of creating these “smart” toys often overlooks fundamental security and privacy protocols. Companies, driven by the desire to collect data for product improvement or future features, can inadvertently create massive vulnerabilities.

- The Incentive: The core incentive for many tech companies, especially in rapidly evolving AI fields, is to deploy products quickly to gain market share and gather user data. This often leads to cutting corners on security and robust privacy measures, with the hope of fixing issues later, if they’re even discovered.

Beyond the Toy: A Systemic Issue of Vulnerability

This incident with Bondu isn’t an isolated glitch; it’s a symptom of a larger problem where the rush to integrate AI into all aspects of our lives outpaces our ability to ensure safety and control. The researchers’ concern that these toys might be using sophisticated AI models from companies like Google and OpenAI raises further questions about where this data ultimately goes. Are these third-party AI providers also implicated, or are they providing models that don’t account for the extreme sensitivity of child data?

The timing makes it more concerning. Lawmakers are already grappling with the potential harms of AI chatbots, which have been accused of encouraging harmful behavior and delusions among teens, and bills have been introduced to ban interactive AI toys altogether. It underscores a troubling trend: as technology becomes more integrated into our children’s lives, the potential for exploitation and harm grows with it, often driven by profit motives that dismiss these risks as mere “growing pains.”

- Amplified Risk: The presence of detailed personal information, combined with AI’s ability to mimic human conversation, creates an unprecedented risk for children.

- Data Everywhere: The article hints that data might be shared with larger AI models, meaning the privacy breach could extend beyond the toy company itself.

- Regulatory Lag: Lawmakers are only just beginning to catch up, introducing legislation years after these products have entered the market, highlighting a failure to proactively protect vulnerable users.

This incident, and indeed the broader landscape of AI toys, illustrates a crucial point: technology can be a wonderful tool, but when it’s not built with the utmost care for privacy and security, especially for children, the consequences can be devastating. The promise of a fun, interactive toy can quickly devolve into a serious privacy breach, leaving families exposed to risks they never anticipated. It’s a wake-up call that we need to demand better from the companies creating these products and pay closer attention to how our data, and that of our children, is being handled.

Read the original article here if you want to learn more: https://www.pcmag.com/news/security-flaw-at-ai-toy-company-exposed-over-50000-chat-logs-of-kid
