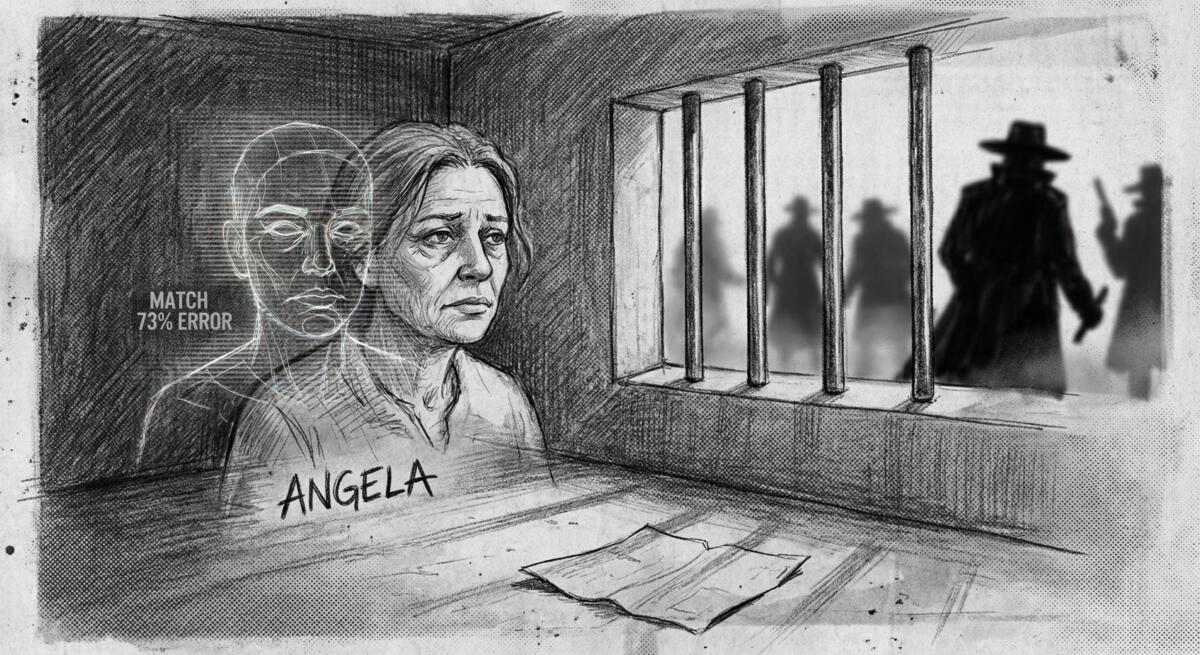

An article published in TechSpot tells the story of Angela Lipps, a 50-year-old Tennessee grandmother who spent six months in jail after facial recognition software incorrectly identified her as a bank fraud suspect in North Dakota, a state she’d never even visited. She was arrested at gunpoint while babysitting four children, held without bail for 108 days, and lost her home, car, and dog before bank records proved she was over 1,200 miles away when the crimes occurred.

The Tech That’s Supposed to Keep Us Safe Is Locking Up Innocent People

Facial recognition sounds brilliant on paper. Point a camera at someone, run it through an algorithm, catch the bad guy. Clean, efficient, scientific. Except when it’s not.

Angela Lipps learned this the hard way when US Marshals showed up at her door with guns drawn. The crime? Detectives in Fargo ran surveillance footage through facial recognition software, and it spat out her name. One investigator looked at her driver’s license photo and some Facebook pictures, decided “yeah, looks close enough,” and that was apparently sufficient to ruin her life.

No phone call. No follow-up questions. No, “hey, were you in North Dakota on these specific dates?” Just an arrest warrant and six months of hell.

When “Good Enough” Becomes “Good Enough For Jail”

This is where the promise of technology crashes headlong into reality. Facial recognition is fast. It's scalable. It lets understaffed police departments process thousands of images quickly. And because it feels scientific (it's AI! It's algorithms! It's data!), people trust it more than they should.

But here’s what actually happened in Angela’s case:

- The software made a match (wrong)

- A detective eyeballed some photos and thought “close enough” (also wrong)

- Nobody checked her bank records before the arrest

- Nobody called her to verify her location

- Nobody seemed to think “maybe we should be really damn sure before we arrest someone at gunpoint”

The technology made investigation faster, but it didn’t make it better. And when speed becomes the priority, when we optimize for efficiency over accuracy, real human beings get crushed in the gears.

Who Benefits? Not the People Being Scanned

Facial recognition companies sell their software to police departments with promises of solved crimes and public safety. Police departments buy it because they’re overworked and it seems like a shortcut. Politicians love it because they can claim they’re being “tough on crime” with “cutting-edge technology.”

But who actually pays when it goes wrong?

Angela Lipps paid. She lost four months in a Tennessee jail, two more months in North Dakota, her home, her car, her dog, and probably a not-insignificant chunk of her mental health. On Christmas Eve, she was released in Fargo with no money, no coat, and no way home. Local attorneys and a nonprofit had to step in because the police department that destroyed her life couldn’t be bothered to help fix it. They haven’t even apologized.

Meanwhile, the software company still gets paid. The police department faces no real consequences. The system that enabled this nightmare keeps chugging along.

This Isn’t a Bug, It’s a Pattern

In 2020, Robert Williams was arrested in Detroit after facial recognition software flagged him for stealing watches. He was innocent. The city eventually paid him $300,000 and promised to stop making arrests based solely on facial recognition results. (The fact that they were doing that in the first place should terrify you.)

A high school kid got swarmed by police because an AI system mistook his bag of Doritos for a gun. Multiple Black Americans have been wrongfully arrested because facial recognition software is demonstrably less accurate at identifying people with darker skin. The technology doesn't just fail randomly; it fails in predictable, documented ways that disproportionately harm certain groups.

And yet we keep deploying it. Why? Because it’s convenient. Because it’s modern. Because admitting that our shiny new tools are deeply flawed would require slowing down, being more careful, and prioritizing people over efficiency. That’s not how the incentives work.

What Happens When We Automate Suspicion?

There's a bigger issue here: we're outsourcing human judgment to systems that have no stake in the outcome.

When a human detective investigates a suspect, they can be held accountable. They can be questioned. Their reasoning can be examined. But when an algorithm makes the initial identification, it becomes a black box. “The computer said so” becomes a shield against scrutiny. The detective who looked at Angela’s photos and decided they matched the suspect can now say “well, the AI flagged her first.” Responsibility diffuses into the system, and suddenly nobody’s really at fault.

This is what happens when we let technology replace judgment instead of supporting it. The software becomes the decision-maker, and humans become rubber stamps. And when things go catastrophically wrong, as they did for Angela, there's no clear person to blame, no easy way to prevent it from happening again, and certainly no incentive for the people who built or bought the system to acknowledge its flaws.

Why This Should Scare the Hell Out of You

Angela Lipps is alive, free, and trying to rebuild her life from scratch. But imagine if she’d been less lucky. Imagine if her attorney hadn’t been thorough enough to pull those bank records. Imagine if she’d taken a plea deal out of desperation, just to get out of jail faster. Or imagine if she’d been sentenced based on faulty technology and lazy police work.

This isn’t science fiction. This is happening now, with technology that’s being rolled out faster than we can understand its limitations, by companies more interested in market share than accuracy, and bought by institutions more concerned with efficiency than justice.

Technology can help solve crimes. But when we deploy it carelessly, when we trust it blindly, when we optimize for convenience over correctness, we don’t get better justice. We get Angela Lipps, sitting in a North Dakota jail on Christmas Eve, 1,200 miles from home, wondering how the hell an algorithm destroyed her life, and why nobody seems to care.

Read the original TechSpot article if you want to learn more: "Grandmother spent six months in jail after AI facial recognition misidentified her"