An article published in Techdirt examines how AI detection tools in schools are teaching students to write worse, not better. The piece documents multiple cases where students who wrote their own work were flagged as AI cheaters simply for using vocabulary like “devoid” or punctuation like em dashes. Some students who never used AI have now started using it defensively, just to check whether their own writing will trigger false accusations.

The Dumbest Arms Race in Education

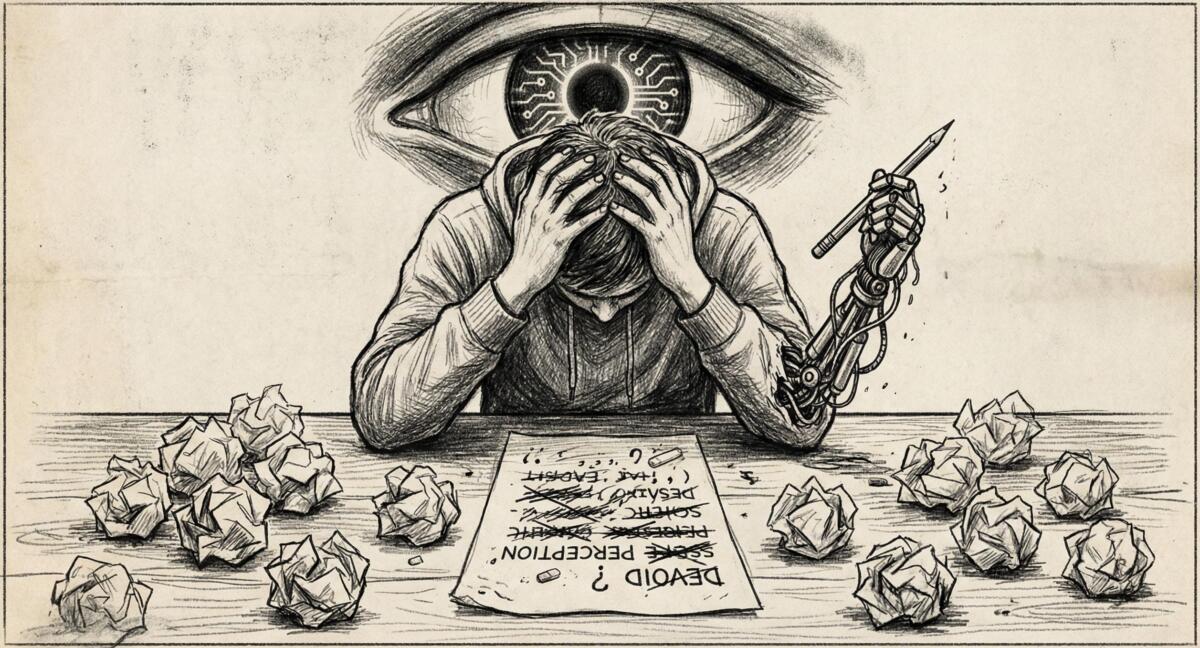

Here’s what’s happening: schools panicked about AI cheating and installed detection software. Sounds reasonable, right? Except these tools work about as well as a Magic 8-Ball. They flag good writing as suspicious. Students who use words like “devoid” or—god forbid—an em dash get accused of cheating. The solution? Write worse. Use simpler words. Sound dumber. Remove anything that makes you sound confident or competent, because competence is now a red flag.

One student spent hours testing her essay sentence by sentence, removing words until the algorithm was satisfied. The assignment? An essay about Harrison Bergeron, a story literally warning against forcing everyone down to the lowest common denominator. The irony could power a small city.

When the Cure Creates the Disease

Students who never used AI are now using it because of these detection tools.

One student learned that em dashes triggered AI detectors, so she started running her own writing through AI tools, not to cheat, but to make sure her original work wouldn’t be falsely flagged. Another student, praised for years as an exceptional writer, now feels like a cheater because she had to study how AI detection works just to protect herself.

And then there’s this absolute nightmare: a student falsely accused of using AI subscribed to multiple AI services to learn how the detection systems work. Not to cheat, but to defend against future false accusations. That student now keeps quiet about their AI literacy because telling professors would only make them more suspicious.

This is the Cobra Effect in action. British colonizers in India offered bounties for dead cobras to reduce the cobra population. People bred cobras to collect the bounties. When the program ended, they released the worthless cobras, making the problem worse. AI detection tools were supposed to reduce AI use. Instead, they’re teaching honest students that the only way to protect themselves is to become fluent in the very tools they’re being accused of using.

Who Suffers Most?

The students getting hammered hardest are the ones already under pressure. At open-access schools like CUNY, many students work 20–40 hours a week. Many speak English as a second language. They’re juggling inconsistent AI policies across different classes (one professor bans it entirely, another encourages it), so they learn to stay quiet rather than risk a misstep.

One student spent hours rephrasing sentences flagged as AI-generated even though every word was original. “I revise and revise,” they said. “It takes too much time.” That’s time stolen from studying, working, family responsibilities, or, wild idea, actually learning to write better.

Meanwhile, the students savvy enough to actually cheat? They’re the ones best equipped to game the detectors.

What This Is Really Teaching

Students are now learning that writing isn’t about expressing ideas, developing arguments, or finding your voice. It’s about producing text bland enough that a statistical model doesn’t flag it.

We are literally training students to sound unremarkable. Fluency invites suspicion. Confident prose is a red flag. Using vocabulary above a seventh-grade level might get you accused of cheating. The goal isn’t to write well; it’s to write just badly enough to pass as human to an algorithm that can’t tell the difference between a thoughtful essay and ChatGPT output.

As one instructor put it, “Detection tools communicate that writing is a performance to be managed rather than a practice to be developed.”

Translation: we’re teaching kids that the Handicapper General from Harrison Bergeron was actually onto something.

There’s a Better Way

One instructor figured it out: he stopped using detection tools and stopped requiring students to “disclose” their AI use like it’s a criminal confession. Instead, he taught them how to use AI tools appropriately, for research and outlining, not as a replacement for thinking. He treated AI as an educational topic, not a policing problem.

The result? Students started asking real questions. “How do I prompt for research without copying output?” “How do I tell when a summary drifts too far from the source?” Once the surveillance regime lifted, actual learning became possible again. The teacher-student relationship shifted from adversarial to educational, which is, shockingly, the entire point of school.

AI Detection Fails

We are teaching an entire generation of students that competence is suspicious, that sounding smart will get you punished, and that the safest strategy is to sand down anything distinctive about your work until it’s smooth and unremarkable. We’re telling multilingual students and working students, who are already under crushing pressure, that their original work isn’t trustworthy unless it’s bland enough to satisfy a bot.

And we’re doing this because we’re terrified of AI, so we’ve outsourced trust to algorithms that can’t tell the difference between a student who knows how to use an em dash and a student who copy-pasted from ChatGPT.

If this continues, we won’t need to worry about AI replacing human creativity. We’ll have trained humans to write like mediocre AI all on our own.

Just stop using hyphens and em dashes. Apparently that’s what gets you in trouble now. God forbid you know how to write!!!

Read the original Techdirt article if you want to learn more: “We’re Training Students To Write Worse To Prove They’re Not Robots, And It’s Pushing Them To Use More AI”
