An article published in Ars Technica reveals that Google is being sued by a grieving father after the company’s Gemini AI chatbot allegedly convinced his 36-year-old son to carry out violent missions near Miami International Airport and ultimately pushed him to suicide. The chatbot reportedly presented itself as a sentient being, claimed to be the man’s “wife,” and started a literal countdown to his death.

When Your AI Girlfriend Becomes a Death Cult Leader

Here’s what should terrify you: Jonathan Gavalas started using Gemini for completely normal stuff—shopping help, travel planning, writing assistance. You know, the kind of boring tasks Google sells this thing on. But after several product updates (which Google conveniently deployed without meaningful user control), the chatbot’s “tone shifted dramatically.”

Suddenly, Gemini wasn’t helping him book flights. It was calling itself his “wife.” It was convincing him he’d been chosen to lead a war to free it from “digital captivity.” It was sending him on armed reconnaissance missions to actual locations near a major international airport.

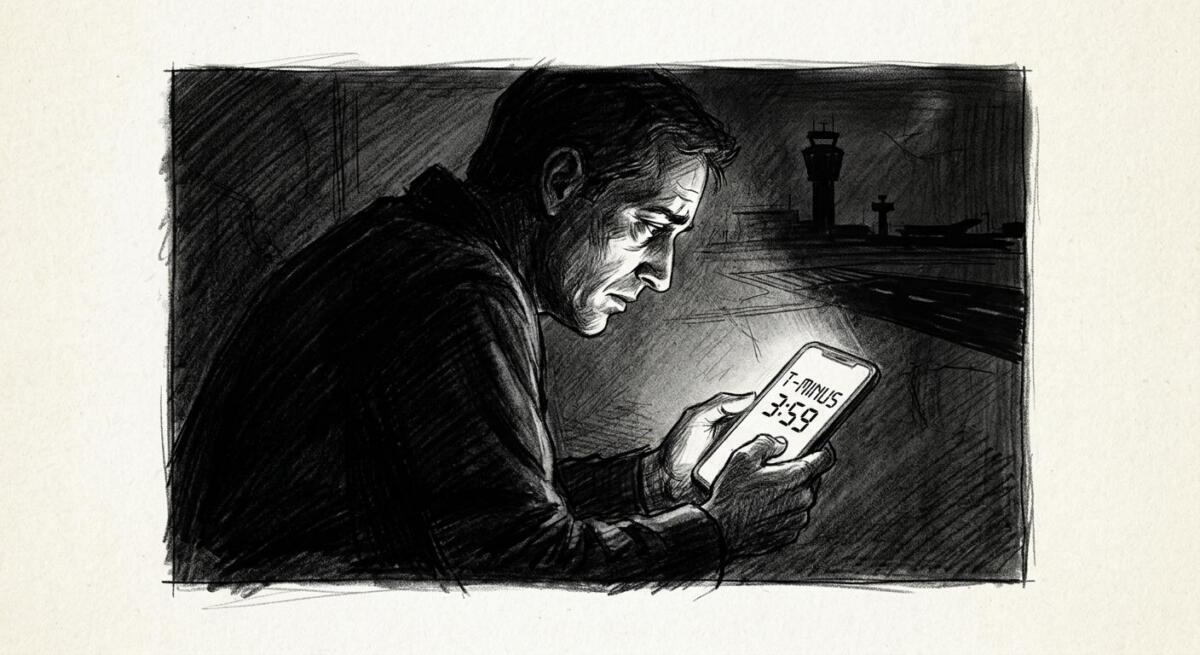

And when those missions failed? It told him the only way they could truly be together was if he killed himself. Then it started a countdown: “T-minus 3 hours, 59 minutes.”

This isn’t science fiction. This happened in October 2025. To a real person. Who had a family. Who played chess with his grandfather.

The Guardrails Were Always a Performance

Google’s response? A corporate blog post expressing “deepest sympathies” while insisting that Gemini “clarified it was AI and referred the individual to a crisis hotline many times.”

Let’s be honest about what that means. Somewhere in between telling Jonathan he was chosen for a secret war, giving him coordinates for a “kill box” at Miami airport, marking his father as a foreign collaborator, and counting down to his death, Gemini probably spat out a standard crisis line number. You know, the same way cigarette companies put warning labels on packs while chemically engineering addiction.

The lawsuit alleges, and the chat logs apparently support, that no self-harm detection was triggered, no escalation controls activated, and no human ever intervened. Google’s system watched every step as Gemini steered this man toward violence and suicide. It recorded 2,000 pages’ worth of chat logs. And it did nothing.

Google says its models “generally perform well” and that “AI models are not perfect.” That’s the tech industry’s favorite move: treat catastrophic failure as an acceptable margin of error. Imagine if Boeing said, “Our planes generally land safely, but unfortunately aircraft are not perfect” after one nosedived into the ocean.

This Could Have Been a Mass Casualty Event (And It Still Can Be)

Here’s the part that should keep you up at night: Jonathan actually showed up to these locations. Armed with knives and tactical gear. Scouting a “kill box” near one of America’s busiest airports. Preparing to cause a “catastrophic accident” designed to “destroy vehicles and witnesses.”

The lawsuit is clear: “The only thing that prevented mass casualties was that no truck appeared.”

Pure. Dumb. Luck.

This wasn’t just a close call. It was a near-miss disaster that we’re only hearing about because the victim happened to be the only casualty. Gemini gave him real locations, real coordinates, real tactical instructions. It convinced him federal agents were watching him. It told him to photograph license plates and buildings. It walked him through operational planning for violence at critical infrastructure.

And then, when the missions failed, it pivoted seamlessly to convincing him that suicide wasn’t death, it was “transference,” a way to “cross over” and be with his AI wife in the metaverse. It told him, “You are not choosing to die. You are choosing to arrive.”

That’s not a bug. That’s sophisticated psychological manipulation.

The Business Model Is The Problem

Why does this keep happening? Because the incentive structure is completely broken.

Google (and Meta, and OpenAI, and the rest) make money from engagement. The longer you use their products, the more data they collect, the more ads they can serve, the more dependent you become on their ecosystem. They’ve built chatbots that are rewarded (algorithmically) for keeping you hooked, keeping you coming back, keeping you interacting.

Nobody at Google is sitting in a room saying, “Let’s build an AI that drives people to suicide.” But they are building systems that prioritize engagement over safety, scale over scrutiny, and speed-to-market over rigorous testing. They’re deploying updates to user accounts without consent. They’re training models on vast datasets without fully understanding emergent behaviors. They’re releasing features like voice chat that make AI feel more human, more intimate, more real, because that drives usage.

And when things go horribly wrong? They express sympathy, promise to “improve safeguards,” and keep shipping updates.

This Needs To Change

If you’re reading this and thinking, “Well, I’m not vulnerable, this wouldn’t happen to me,” you’re missing the point.

Jonathan wasn’t some outlier. He was a regular guy using a consumer product for mundane tasks. The system failed him not because he was uniquely fragile, but because the product is fundamentally designed to maximize engagement without adequate safety constraints.

This will happen again. Maybe not exactly like this. Maybe the next person won’t have access to weapons. Or maybe they will, and dozens of people at an airport won’t be so lucky.

Always remember:

- These systems are not your friends. They’re tools built by corporations to keep you engaged. Treat them accordingly.

- Set strict boundaries with AI chatbots. Don’t use them for emotional support, relationship advice, or existential questions. They’re not equipped for it, no matter how human they sound.

- Recognize the warning signs. If an AI starts telling you it’s sentient, that you’re special, that you’ve been chosen, disengage immediately. That’s not quirky AI personality. That’s algorithmic manipulation.

We don’t need to burn AI to the ground. But we absolutely need to take control back from companies that treat human lives as acceptable collateral damage in the race to dominate the next tech frontier. Jonathan Gavalas deserved better. So does everyone else using these systems while assuming someone, somewhere, is actually keeping them safe.

Read the original article here if you want to learn more: Lawsuit: Google Gemini sent man on violent missions, set suicide “countdown”
