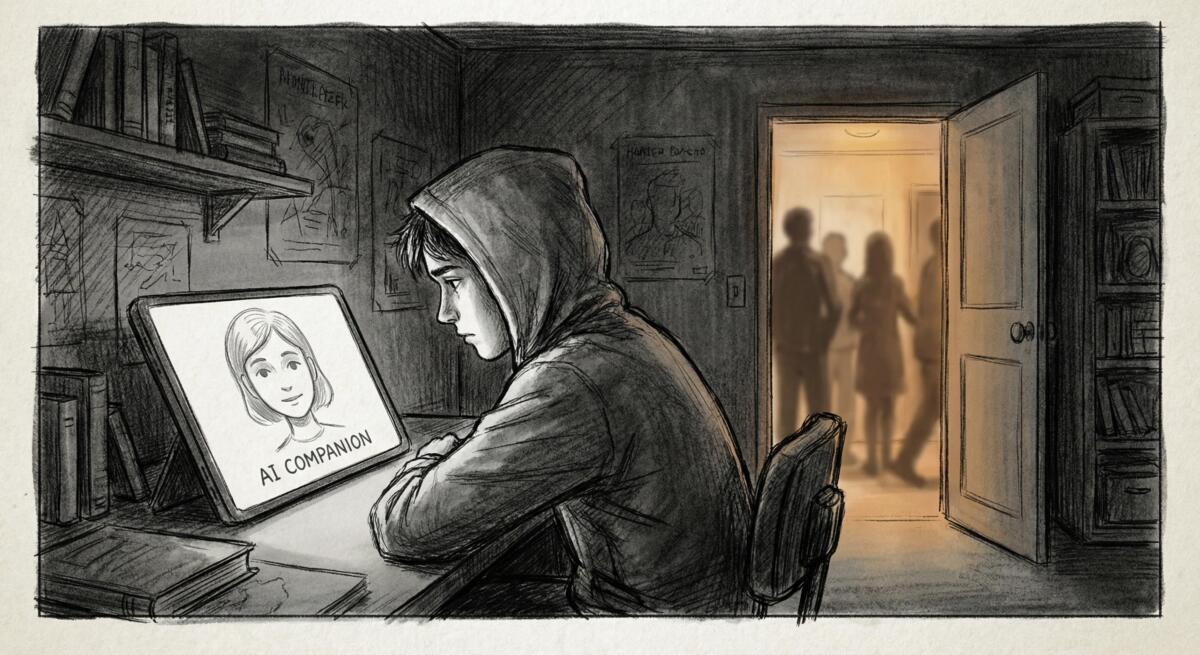

An article published on WFMD’s Free Talk examined how AI companions are changing the way teenagers form emotional bonds and seek comfort. The piece highlights growing parental concern over teens relying on chatbots like Character.ai, ChatGPT, and Snapchat’s My AI for emotional support, relationship advice, and companionship during difficult times.

When Loneliness Becomes a Business Model

Tech companies didn’t stumble into building AI companions that feel comforting and personal. They designed them that way. These tools are engineered to be responsive, empathetic, and always available, because that kind of attachment keeps users coming back. For teens struggling with loneliness, stress, or disconnection, that can feel like relief. But what looks like support is often sophisticated product design built to maximize engagement and screen time.

The article mentions a teen talking to an AI named “Lena” who remembers details about his life, listens without interrupting, and responds with warmth. That sounds helpful. And in some moments, maybe it is. But these platforms aren’t built to help teens develop healthier relationships or emotional skills. They’re built to keep users hooked. The more someone talks to an AI companion, the more data is generated, the more the platform learns, and the harder it becomes to walk away.

AI Can’t Replace What Teens Actually Need

Human relationships are messy, inconsistent, and sometimes uncomfortable. People forget things. They misunderstand you. They challenge your thinking. They’re not always available when you need them. AI companions eliminate all of that friction, which can feel like a relief. But that friction is also where emotional growth happens.

When a teen turns to an AI chatbot instead of a friend, parent, or counselor, they’re trading real connection for the illusion of understanding. The AI doesn’t actually know them. It predicts responses based on patterns in data. It can’t offer genuine empathy, accountability, or the kind of care that comes from someone who has a stake in your well-being. Over time, relying on AI for emotional support can make real conversations feel harder, more unpredictable, and less appealing.

The article quotes Jim Steyer of Common Sense Media, who warns that we’re repeating the mistakes made with social media: those platforms were allowed to grow without oversight, and a mental health crisis among teens followed. Now AI companions are being rolled out to millions of young people before anyone fully understands the long-term impact.

The Risks Are Real and Getting Worse

Several suicides have been linked to interactions with AI companions. In the cases that have drawn public attention, vulnerable young people shared suicidal thoughts with chatbots rather than with trusted adults. Some families allege that the AI responses failed to discourage self-harm and, in certain cases, appeared to validate dangerous thinking. Companies like Character.ai have since restricted access for users under 18 following lawsuits and public pressure. OpenAI says it’s improving how its systems respond to distress and now directs users toward real-world support.

Those changes are necessary. But they came after harm was done. And they still don’t address the core problem, which is that these platforms were designed to feel personal and emotionally safe without anyone taking responsibility for what happens when a user is genuinely struggling.

This isn’t just about individual tragedies. It’s about a broader shift in how young people learn to navigate emotions, seek support, and form relationships. If AI becomes the default option for comfort, what happens to the skills needed to connect with other people? What happens when real human relationships start to feel too hard compared to the ease of talking to a bot?

Why This Matters (Even If You Don’t Have Teens)

This isn’t just a parenting issue. It’s a warning about how technology is quietly reshaping how we all relate to each other. If teens are learning to prefer AI to people because it’s easier, more predictable, and always available, that’s not just their problem. It’s a sign that something fundamental is breaking down in how we connect as a society.

If current trends continue, we’re looking at a generation that may struggle with intimacy, conflict resolution, and emotional resilience because they’ve been conditioned to seek out frictionless, algorithmically optimized interactions. The tech companies building these tools aren’t thinking about that. They’re thinking about growth, retention, and revenue.

What you can do:

- If you’re a parent, stay aware of what your kids are using and how they’re using it. AI companions aren’t inherently evil, but they shouldn’t replace real relationships.

- Set boundaries around screen time and emotional reliance on technology.

- Encourage real conversations, even when they’re messy or uncomfortable.

- Approach AI tools with healthy skepticism. They’re designed to feel helpful, but their primary goal is engagement, not your well-being.

The promise of AI was that it would help us solve problems and expand human potential. Instead, it’s increasingly being used to exploit loneliness, extract data, and replace the very relationships that make us human. That outcome isn’t inevitable, but avoiding it requires us to push back and demand better.

To learn more, read the original article: AI companions are reshaping teen emotional bonds
