In “Giving your healthcare info to a chatbot is, unsurprisingly, a terrible idea”, The Verge reports that, according to OpenAI, more than 230 million people ask ChatGPT for health and wellness advice each week. The piece also highlights the launch of ChatGPT Health, a consumer feature that encourages users to share sensitive data such as diagnoses, medications, and lab results, under privacy promises that are largely policy-based rather than legally guaranteed.

The promise: guidance, access, and a more navigable healthcare maze

AI tools can help with health, especially in a system that’s confusing, expensive, and uneven. The article is fair to note why people reach for chatbots: interpreting lab jargon, organizing questions for a doctor visit, tracking habits, and getting basic explanations when appointments are weeks away. For people who are anxious, uninsured, time-poor, or dismissed by clinicians, an always-available “ally” can feel like a relief.

And there’s real potential here: better patient education, fewer administrative burdens, and more confidence walking into the exam room. Used carefully as a support tool rather than a decision-maker, a well-designed assistant could reduce friction in a healthcare system that often seems designed to exhaust people.

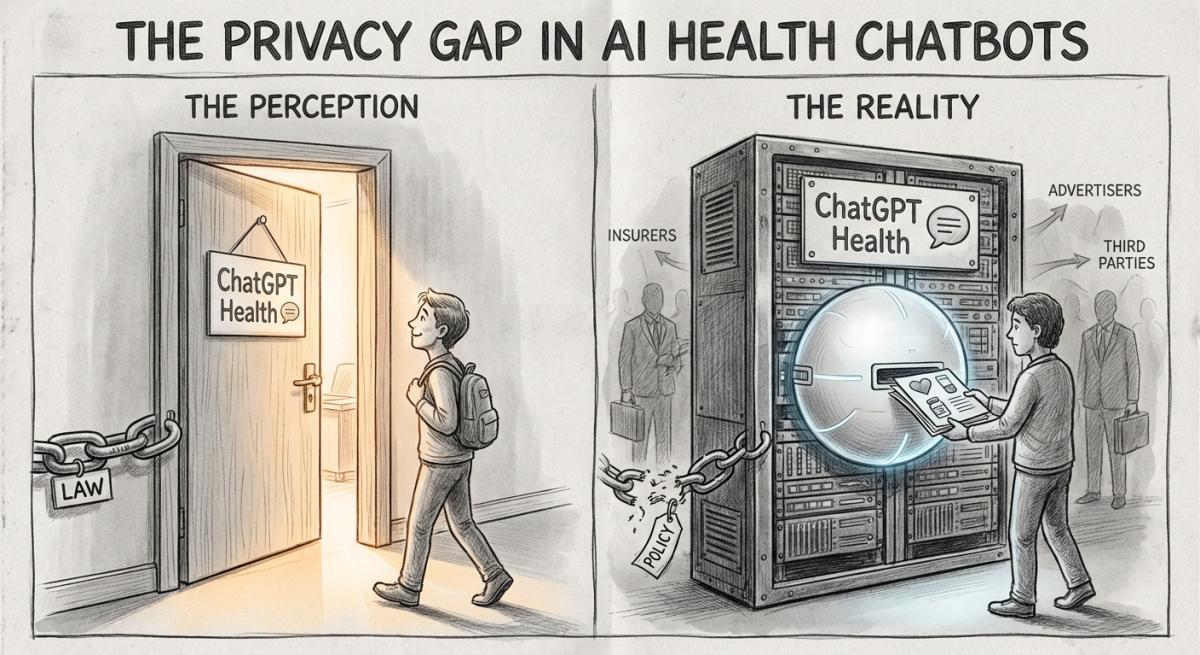

The reality: “trust us” is not a privacy framework

The core warning in the article lands because it’s about incentives and enforcement, not vibes. Medical privacy works (imperfectly) because providers are bound by rules and penalties. Consumer chatbots typically aren’t.

The Verge highlights a key gap: with no comprehensive federal privacy law and uneven state protections, users are often left with whatever a company’s terms and privacy policy currently say, and those can change. That means the protection level is not anchored to your rights; it’s anchored to a product decision.

A few practical problems emerge from that:

- Policy promises aren’t the same as legal obligations. “We won’t use it to train models” is not the same as “we are prohibited from repurposing it.”

- Name confusion creates false safety. The article notes how easy it is to mix up “ChatGPT Health” (consumer) with “ChatGPT for Healthcare” (enterprise/clinical), and people will naturally assume the stricter rules apply to both.

- Data tends to drift toward monetization. Once health data is inside a tech platform, the long-term temptation is obvious: personalization, upsells, partnerships, or future product training, especially when revenue pressure rises.

This is the modern tech pattern: collect now, justify later, apologize if caught, and update the policy when convenient.

Safety theater: disclaimers that dodge regulation while encouraging medical use

The article also calls out something that should bother anyone who still believes tech can be humane: the way companies simultaneously encourage medical use while avoiding medical-device accountability.

OpenAI can say ChatGPT Health isn’t for “diagnosis or treatment,” and that disclaimer helps keep it away from stricter oversight (FDA-style regulation). But the product marketing and suggested uses (interpreting labs, reasoning through treatment decisions, connecting app-based health data) push directly into the space where people will rely on it.

This is where “move fast and break things” becomes dangerous. In medicine, what breaks might be:

- someone delaying care because the chatbot sounds confident,

- someone changing a medication routine based on a persuasive explanation,

- someone misinterpreting risk because the tool presents uncertainty as clarity.

And the article’s examples matter because they show a recurring AI failure mode: confident wrongness, delivered in a calm, authoritative tone that non-experts are primed to trust, especially when they’re scared.

The deeper harm: medical data plus platform logic equals surveillance by another name

What worries me most isn’t just individual bad advice; it’s what happens when healthcare becomes another engagement funnel.

If AI platforms become a default layer between people and healthcare information, we’re effectively normalizing:

- More intimate data extraction (from records, apps, wearables, “memories”)

- More dependency (the chatbot becomes the always-on translator of your body)

- More power asymmetry (a corporation sets the rules for how your health story is stored, processed, and potentially repurposed)

Even if encryption is real, the bigger question is governance: who can access it, under what circumstances, for what secondary uses, and what recourse you have when something goes wrong. “Delete your Health memories” sounds empowering until you remember the average person can’t realistically audit what was retained elsewhere, logged, or learned indirectly.

And looming behind all of this is the scale incentive: health is a massive market, and AI companies are fighting to become the front door. Once that happens, patients don’t just become users; they become inputs.

Why this matters beyond “people who use chatbots”

This affects everyday life because health anxiety, confusing test results, and care delays are nearly universal, and AI companies are positioning themselves as the easiest shortcut in moments when people are vulnerable. If the current trajectory holds, we risk building a system where deeply personal medical data is governed more by product strategy than patient rights. The cost won’t be evenly distributed: people with less access to clinicians and less time to verify answers will quietly absorb the most harm.

A few realistic actions readers can take now:

- Treat health chatbots as a drafting and question-prep tool, not a clinician.

- Avoid uploading identifiable records unless you are certain what legal protections apply.

- Take screenshots or notes you can bring to a real provider rather than “sharing everything” for personalization.

- Push lawmakers for clear rules that bind consumer AI tools handling health data, not voluntary promises.

Technology can help in healthcare, but only if we stop confusing convenience with consent and branding with accountability.

Read the original article here if you want to learn more:

https://www.theverge.com/report/866683/chatgpt-health-sharing-data
