A New York Times article reports on a lawsuit by job seekers alleging that Eightfold AI’s résumé-screening and applicant-scoring system should be regulated under the Fair Credit Reporting Act (FCRA), just as credit bureaus are. The case argues that applicants deserve to know what data is collected about them and how rankings are produced, and that they need a way to dispute errors.

The New Gatekeeper: Hiring “Scores” Without Due Process

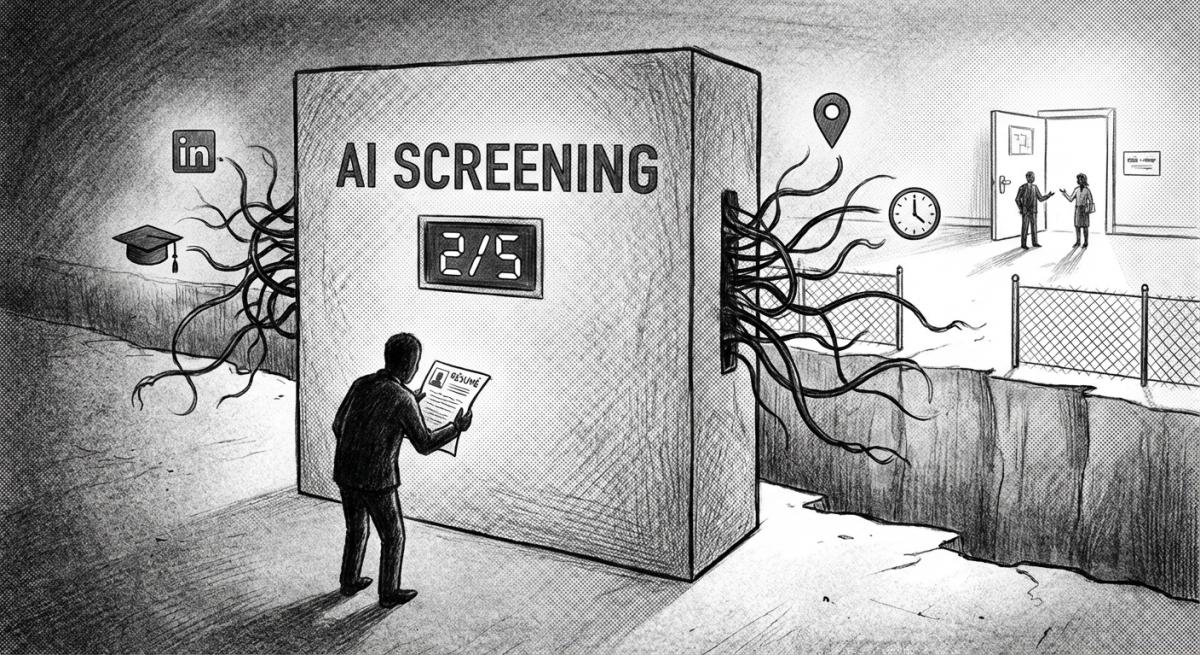

The article puts its finger on something many job seekers already feel: hiring has quietly turned into a two-step system, and the first step often isn’t human. Eightfold’s tool pulls from sources like LinkedIn and compares candidates to employer needs, then assigns a score from one to five, potentially determining who ever gets seen by a recruiter. In theory, that’s just efficiency: fewer hours sorting résumés, faster matches, a more organized pipeline.

In practice, it’s a form of automated gatekeeping with almost none of the guardrails we’ve learned (the hard way) to demand in other scoring systems. If you’re denied credit because of a credit bureau’s report, you have rights: you can see the file and dispute inaccuracies. If you’re rejected because of an AI hiring score, you may get nothing, not even confirmation that you were scored, let alone what data shaped the result. The lawsuit’s core claim is straightforward: when a company compiles dossiers about your “personal characteristics” and uses them to affect your eligibility for employment, the FCRA’s transparency and dispute mechanisms should apply.

Incentives: Scale First, Accountability Later

The business model described here practically guarantees opacity. Vendors sell “time and money saved,” and employers want plausible deniability: “the system ranks them like a recruiter would.” But automation changes the moral stakes. A single recruiter’s flawed judgment affects dozens of people; a flawed model can affect millions, silently and consistently.

The incentives tilt toward:

- Maximizing throughput: screen more applicants, faster, with fewer humans involved.

- Managing employers’ liability optics: outsource the decision layer to a “neutral” tool, even when neutrality is unproven.

- Protecting proprietary systems: keep scoring logic secret because it’s “competitive advantage,” even when that secrecy prevents meaningful appeals.

That’s how you get the worst combination: a high-impact decision system treated like a low-stakes productivity app.

Bias by Design, and the Convenient Confusion Around It

One quote in the piece is unusually candid: these tools are “designed to be biased” in the sense that they’re built to find a certain kind of candidate. That’s true, and it’s also where harm hides. Selection is the point of hiring, but automated selection often converts messy human preferences into rigid statistical patterns. If the training data reflects an industry that historically favored certain schools, career paths, gap-free résumés, or particular phrasing, the model can replicate that history at scale while insisting it never “uses protected characteristics.”

This is why the Workday lawsuit mentioned in the article matters. Even if a system doesn’t explicitly ingest race, age, or disability, it can still infer them from proxies (work history patterns, graduation dates, location, employment gaps, types of roles) and produce outcomes that disproportionately screen out protected groups. And because these decisions happen “in the middle of the night,” people experience rejection as something bureaucratic: faceless, instant, and unchallengeable.

Privacy Creep: Hiring Is Becoming Another Data Extraction Industry

Eightfold markets itself as holding profiles of more than a billion people across geographies and professions. That claim should trigger the same discomfort we now feel about social platforms, not because data can’t be useful, but because “useful” quickly becomes “limitless.” The article’s plaintiffs aren’t just asking “why didn’t I get the job?” They’re asking: What is being collected about me, who gets it, and how do I correct it?

This is the familiar modern tech pattern: gather as much as possible, justify it later, and treat consent as a box to check. Hiring data is especially sensitive because it affects livelihoods. When eligibility for work depends on opaque practices that resemble data brokerage, we effectively normalize surveillance as a prerequisite for employment.

This Isn’t a “Tech Issue.” It’s a Basic Rights Issue.

Most people don’t experience AI hiring systems as innovation; they experience them as silence: endless applications, instant rejections, no explanation, no recourse. If this continues unchecked, we risk building a labor market where employment opportunity depends on unaccountable scoring systems the way credit access once did, but with fewer consumer protections and even less visibility.

At a human level, the response isn’t to reject technology; it’s to demand due process. Ask employers whether they use automated screening, and request feedback in writing when possible. Keep your own records, watch for patterns, and treat platforms’ “easy apply” funnels with healthy skepticism. And support regulatory efforts that require transparency, auditability, and a real right to dispute, because “computer says no” is not a hiring standard any society should accept.

Read the original article in The New York Times if you want to learn more.
