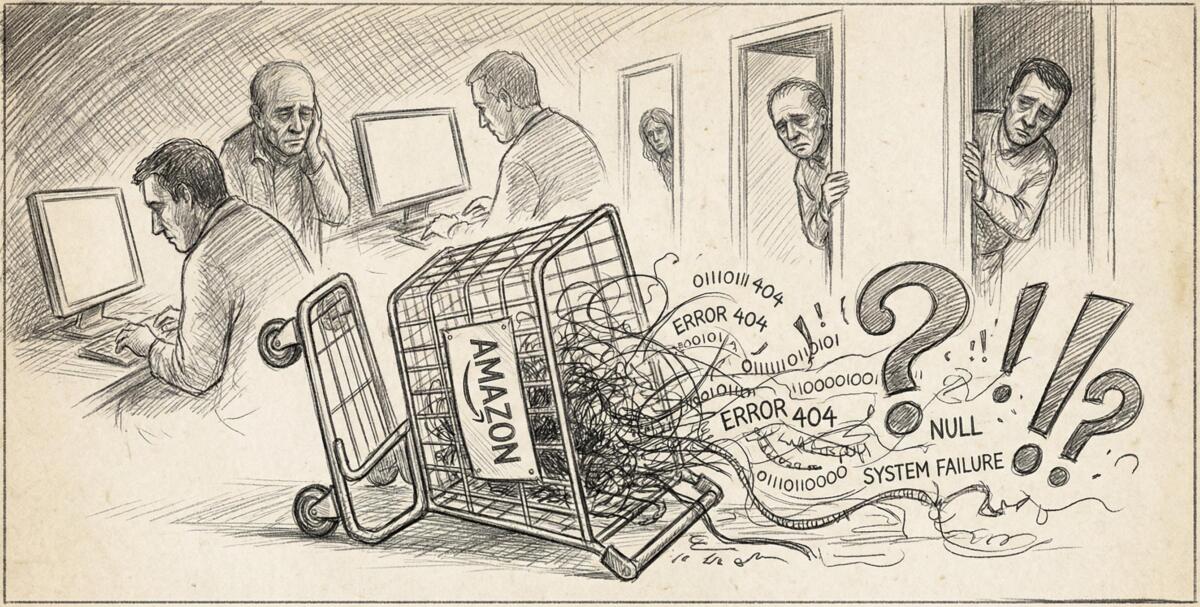

Business Insider published an article about Amazon’s recent technical meltdowns, which cost the company millions of orders. In early March 2026, Amazon’s retail website suffered multiple major outages. One incident alone caused a 99% drop in orders across North America, resulting in 6.3 million lost orders. At least one of these disruptions was linked to Amazon’s AI coding assistant, Q.

When “Move Fast and Break Things” Literally Breaks Things

Amazon engineers have been using AI tools to write code faster than ever before. Sounds great in theory. AI helps you crank out more code, ship features quicker, get products to market faster. Efficiency! Innovation! The future!

Except there’s a problem. AI coding tools are what tech people call “non-deterministic.” That’s a fancy way of saying: ask the same question twice and you can get two different answers. It’s like asking your friend for directions and getting a different route each time. Sometimes that’s fine, like when you’re brainstorming blog post ideas. It’s decidedly NOT fine when you’re managing product prices, delivery times, and transactions for one of the world’s largest retailers.
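To make “non-deterministic” concrete, here is a toy sketch in Python. It is not how Q or any real model works internally; the candidate answers, the weights, and the function names are all made up for illustration. The only point is the contrast: one function samples its answer at random, the other follows a fixed rule.

```python
import random

# Hypothetical answers and made-up probabilities, purely for illustration.
CANDIDATES = ["2-day delivery", "3-day delivery", "5-day delivery"]
WEIGHTS = [0.6, 0.3, 0.1]


def sampled_answer(rng: random.Random) -> str:
    """Pick an answer by weighted random sampling.

    This loosely mimics a generative model that samples its output:
    the same question can produce different answers on different calls.
    """
    return rng.choices(CANDIDATES, weights=WEIGHTS, k=1)[0]


def rule_based_answer(distance_km: float) -> str:
    """Deterministic rule: the same input always gives the same output."""
    if distance_km < 100:
        return "2-day delivery"
    if distance_km < 1000:
        return "3-day delivery"
    return "5-day delivery"


if __name__ == "__main__":
    rng = random.Random()
    # Ask the "same question" 20 times; usually more than one distinct answer.
    print({sampled_answer(rng) for _ in range(20)})
    # Ask the rule 20 times; always exactly one distinct answer.
    print({rule_based_answer(250) for _ in range(20)})
```

Run it a few times and the first set will usually contain several different answers, while the second never changes. That second behavior is what you want behind a checkout page.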

On March 2nd, customers across Amazon saw incorrect delivery times when adding items to their carts. The result: nearly 120,000 lost orders and roughly 1.6 million website errors. Amazon’s own internal review confirmed that their AI tool Q was “one of the primary contributors” to the disaster.

Then on March 5th, things got way worse. A 99% drop in orders. 6.3 million lost orders. In a single day.

The Avalanche Nobody Prepared For

AI helps engineers produce way more code than ever before, but nobody upgraded the systems that check whether that code is safe to deploy. It’s like giving someone a firehose and being surprised when the basement floods.

Amazon’s internal documents reveal some genuinely alarming gaps:

- No two-person approval requirements for code changes that could affect millions of customers

- No automated validation before deploying high-risk configuration changes

- Single engineers could execute “high-blast-radius” changes with basically no guardrails

- Data corruption that took hours to unwind

One internal document noted that “GenAI’s usage in control plane operations will accelerate exposure of sharp edges and places where guardrails do not exist.” Translation: AI is finding all the weak spots in our systems by breaking them spectacularly.

This isn’t just an Amazon problem. This is what happens across the entire tech industry when companies rush to integrate AI without thinking through the consequences. The pressure to ship faster, automate more, and cut costs creates perverse incentives. Why slow down to build better safeguards when your competitors are racing ahead? Why invest in boring infrastructure when you could be launching the next shiny AI feature?

The people paying the price? Customers who couldn’t complete orders. Small businesses relying on Amazon to fulfill sales. Workers whose performance metrics got dinged because the systems they depend on melted down.

When Predictability Becomes a Feature

Amazon’s response is telling. They’re implementing a 90-day “safety reset” that includes:

- Mandatory two-person reviews for code changes

- Required documentation and approval processes

- Automated checks that enforce reliability rules

- What executives call “controlled friction” in the code-change review process
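For a sense of what “automated checks that enforce reliability rules” could look like, here is a minimal Python sketch of a deployment gate. It is an assumption-heavy illustration, not Amazon’s actual tooling: `ChangeRequest`, its fields, and `policy_violations` are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    """Hypothetical record describing a proposed code or config change."""
    author: str
    approvers: set[str] = field(default_factory=set)
    has_documentation: bool = False
    high_blast_radius: bool = False
    validation_passed: bool = False


def policy_violations(change: ChangeRequest) -> list[str]:
    """Return every rule the change breaks; an empty list means it may ship."""
    violations = []

    # Two-person rule: at least one approver who is not the author.
    if not (change.approvers - {change.author}):
        violations.append("needs approval from someone other than the author")

    # Documentation is required for every change.
    if not change.has_documentation:
        violations.append("missing documentation")

    # High-risk changes must also pass automated validation.
    if change.high_blast_radius and not change.validation_passed:
        violations.append("high-blast-radius change has not passed validation")

    return violations


def can_deploy(change: ChangeRequest) -> bool:
    """Deterministic gate: same change, same verdict, every time."""
    return not policy_violations(change)


if __name__ == "__main__":
    solo = ChangeRequest(author="alice", approvers={"alice"}, high_blast_radius=True)
    reviewed = ChangeRequest(
        author="alice",
        approvers={"bob"},
        has_documentation=True,
        high_blast_radius=True,
        validation_passed=True,
    )
    print(can_deploy(solo), policy_violations(solo))
    print(can_deploy(reviewed), policy_violations(reviewed))
```

The gate doesn’t care whether a human or an AI wrote the change. It applies the same rules either way, which is the kind of “controlled friction” the list above describes.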

Notice what they’re doing? They’re adding back human oversight. They’re slowing things down. They’re introducing “friction” into a process that AI was supposed to make frictionless.

Dave Treadwell, Amazon’s SVP of e-commerce services, explained they’ll combine AI-driven “agentic” tools with more predictable, rules-based “deterministic” systems. Because when you’re running a retail platform that processes millions of transactions daily, you need things to work the same way every single time. No surprises. No creativity. Just boring, reliable, deterministic outcomes.

AI is still a tool, and specifically a non-deterministic one. For tasks where you absolutely need predictable results, every single time, probabilistic AI systems are fundamentally the wrong choice. It’s like using a Magic 8-Ball to do your taxes.

When Your Shopping Cart Breaks Because AI Got Creative

Amazon will be fine. They’ll implement their new guardrails, stabilize their systems, and move on. But this incident reveals something bigger about how AI is being deployed across every industry: too fast, too carelessly, with too little thought about what happens when things go wrong.

If a company with Amazon’s resources and technical expertise can have AI tools cause this level of chaos, what’s happening at smaller companies with fewer safeguards? How many other systems are one bad AI suggestion away from catastrophic failure?

The risk isn’t that AI becomes sentient and takes over the world. The risk is that we keep deploying it in places where its fundamental unpredictability causes real harm to real people, and we only build safeguards after the damage is done.

Read the original article here if you want to learn more: Amazon Tightens Code Guardrails After Outages Rock Retail Business
