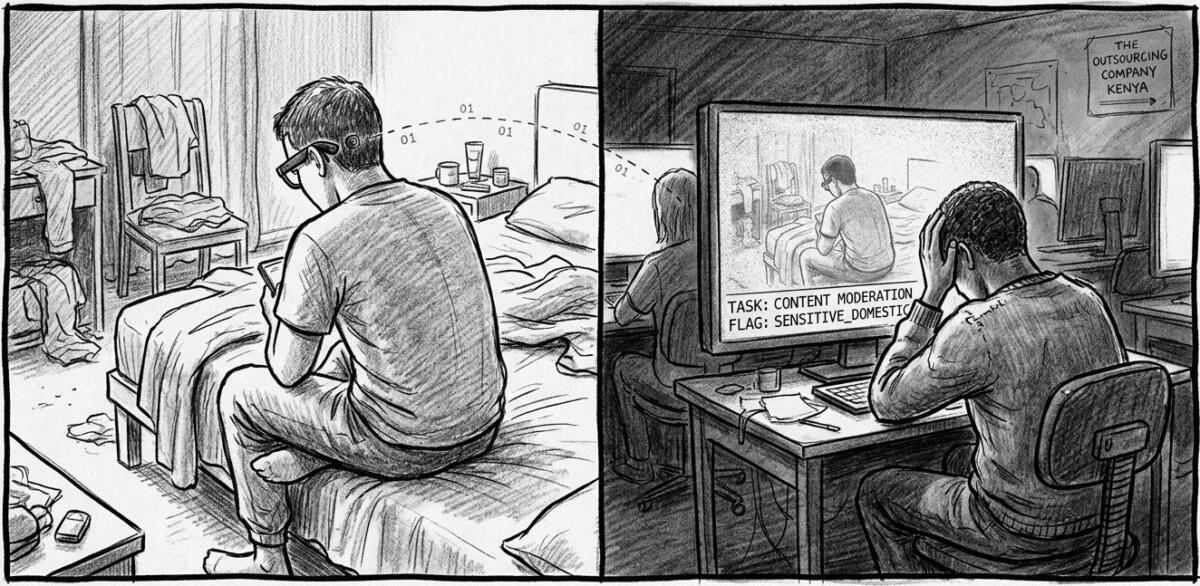

An investigation published in Svenska Dagbladet reveals that Meta’s AI-powered Ray-Ban smart glasses send intimate user footage, including bathroom visits, nudity, and sexual encounters, to low-wage data workers in Kenya who annotate the videos to train Meta’s AI systems. Workers at the subcontractor Sama report seeing bank details, private conversations, and deeply personal moments captured by users who may not realize their glasses are recording or that the footage is being reviewed by human eyes halfway around the world.

The Reality of “Smart” Glasses

Meta sold these glasses as an everyday assistant, a helpful voice that translates languages, answers questions, and captures memories hands-free. And sure, in theory, that sounds useful. The technology itself isn’t inherently evil. Voice translation for travelers? Great. Hands-free photography? Cool. The problem isn’t what the glasses can do. It’s what Meta is doing behind the scenes with the data they collect.

The glasses require constant internet connectivity to function. Every time you ask “Hey Meta” a question, your voice, your video, and whatever the camera sees get processed through Meta’s servers. The company’s terms of service make it clear: your interactions “can be reviewed” by humans. But here’s the thing: most people have no idea that “review” means low-wage workers in Nairobi watching footage of you stepping out of the shower or having sex.

Meta frames this as necessary for “improving the product.” But really, this is about training AI models on the cheap using a global workforce that has no power, no transparency, and no say in what they’re forced to watch. The people doing this work describe feeling uncomfortable, even violated, but they can’t speak up without losing their jobs. They’ve been told: just do the work, don’t ask questions, or you’re gone.

The Illusion of Control

Meta’s privacy policy sounds reassuring. It says you have control over your data. It says voice recordings are only saved if you agree. But dig a little deeper, and you’ll find the truth: you can’t turn off data sharing and still use the AI features. The glasses simply won’t work without sending your data to Meta’s servers.

In stores across Sweden, sales staff told reporters that “nothing is shared with Meta” and that “everything stays locally in the app.” Both statements are false. When the reporters tested the glasses themselves, they found the app was constantly communicating with Meta servers in Sweden and Denmark. There is no local-only mode. There is no opting out if you want the AI to function.

This isn’t transparency. It’s sleight of hand. Meta buries the truth in layers of legal jargon and multi-page terms of service that almost no one reads. The average person buying these glasses at an eyewear shop has no idea what they’re actually agreeing to. They think they’re getting a cool gadget. What they’re really getting is a surveillance device that feeds their most private moments into a global data pipeline.

The Hidden Workforce Training Your AI

Here’s where it gets worse. The footage captured by these glasses doesn’t just get processed by algorithms. It gets watched, labeled, and categorized by real people: specifically, thousands of data annotators working for a company called Sama in Nairobi, Kenya.

These workers describe seeing:

- People using the bathroom
- Couples having sex
- Bank cards and financial details
- Naked bodies
- Intimate conversations

One worker put it bluntly: “We see everything—from living rooms to naked bodies. Meta has that type of content in its databases.”

These aren’t volunteers. They’re low-paid contractors working under strict non-disclosure agreements. If they speak out, they lose their jobs and often their only source of income. They’re not there by choice. They’re there because this is one of the few jobs available in a country where economic opportunities are scarce. Meta and companies like Sama exploit that desperation.

Where Does the Data Actually Go?

Meta’s European executive told reporters it doesn’t matter where data is physically stored, as long as the legal protections are “equivalent” to those in Europe. But Kenya doesn’t have an EU-recognized data protection framework. The EU and Kenya are still in talks, and a formal agreement could take years.

So right now, Meta is transferring intimate footage from European users to a country with no legal safeguards in place. And they’re doing it quietly, buried in terms of service that most users never read.

Data protection experts are alarmed. Kleanthi Sardeli, a lawyer at the privacy advocacy group None Of Your Business, says users likely don’t realize the camera is recording when they activate the AI assistant. “Once the material has been fed into the models, the user in practice loses control over how it is used,” she says.

Petter Flink, an IT specialist at Sweden’s Authority for Privacy Protection, puts it even more bluntly: “The user really has no idea what is happening behind the scenes.”

F*&% Meta…Again

You might not own Meta’s smart glasses. You might never buy a pair. But this investigation exposes a much bigger problem: tech companies are building systems that quietly harvest our most intimate moments, ship that data across the world to underpaid workers, and call it “innovation.”

This is a reminder that when companies like Meta promise you “control” over your data, what they really mean is: you can use our product, but only if you agree to let us do whatever we want with what you share.

If this trend continues unchecked, we’re heading toward a world where every device you wear, every question you ask, and every room you walk into becomes data someone else owns. Not because technology requires it, but because companies have decided that’s more profitable than respecting your privacy.

Technology can be powerful and useful. But right now, it’s being shaped by people who see you as a data source, not a person. It’s time to take that power back.

Read the original article here if you want to learn more: Meta’s AI Smart Glasses and Data Privacy Concerns: Workers Say “We See Everything”
