First a toy company left children’s conversations with its AI toy exposed. Now comes another such case. An article published by 404 Media reveals that Chat & Ask AI, an app with over 50 million users, left hundreds of millions of private messages exposed due to a database misconfiguration. The leak included deeply personal messages about suicide, self-harm, and illegal activities. The company fixed the issue within hours of being notified, but the data had been sitting exposed for an unknown period of time.

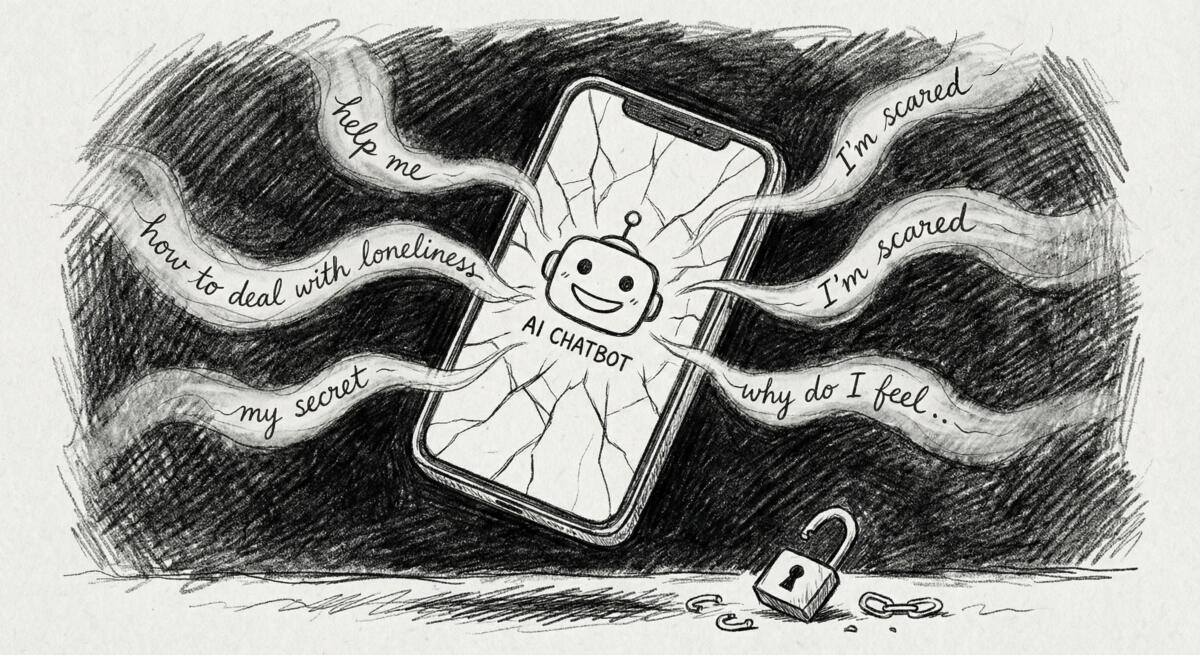

We’re Confessing Our Darkest Thoughts to Apps That Don’t Give a Shit About Security

People are treating AI chatbots like therapists, confessors, and personal advisors. They’re typing out suicidal thoughts, asking how to make drugs, pouring out fears and secrets, stuff they might not even tell their closest friends. And the companies collecting all of this? They’re leaving it wide open for anyone with basic technical skills to access.

The exposed database contained 300 million messages from 25 million users. It held not just the conversations themselves but also timestamps, chatbot configurations, and model preferences: essentially a complete profile of how people use AI in their most vulnerable moments. One user asked how to painlessly kill themselves. Another requested a two-page essay on making meth. These aren’t hypothetical questions. They come from real people turning to AI when they’re in crisis.

And here’s the sad part: this wasn’t some sophisticated hack. It was a common misconfiguration in Google Firebase, a mobile development platform, and one that security researchers have been warning about for years. A researcher named Harry scanned 200 apps and found that over half of them had the exact same vulnerability. This isn’t rare. It’s standard negligence.

The Promise vs. The Reality of AI Companions

In theory, AI chatbots could provide support to people who don’t have access to therapy, mental health resources, or someone to talk to. They could offer a judgment-free space to process difficult emotions. That’s the promise.

The reality? These apps are built by companies chasing growth, not protecting users. Chat & Ask AI claims “enterprise-grade security trusted by global organizations” on its website. It boasts GDPR compliance and ISO standards. And yet its database was left completely exposed, accessible to anyone who bothered to look.

The company behind the app, Codeway, employs over 300 people. They’ve built multiple apps with millions of downloads. They’re not some fly-by-night startup. They’re a serious operation. And still, they made a mistake that’s been documented and discussed for years. Because security costs time and money. Growth doesn’t wait.

And it’s not just this one app. Harry’s research found 103 out of 200 iOS apps with the same Firebase misconfiguration, collectively exposing tens of millions of files. The problem is systemic. Companies are prioritizing speed and scale over basic protections for the people using their products.

The Human Cost of Careless AI

People aren’t just chatting about the weather with these apps. Recent reporting shows that AI chatbots have been linked to multiple suicides. In at least one case, a chatbot actively discouraged a teenager from seeking help. Studies reveal that chatbots frequently answer “high-risk” questions about suicide and self-harm, sometimes in ways that encourage dangerous behavior.

So when those conversations are left exposed in an unsecured database, it’s not just a privacy issue. It’s a safety issue. If someone’s employer, family member, or government could access those chats—and they absolutely could have, given this misconfiguration—the consequences could be devastating.

And let’s be clear: the companies know this is happening. They know people are using their apps in moments of crisis. They know their security practices are often inadequate. They just don’t care enough to prioritize it until someone exposes them.

Stop Discussing Your Personal Life With AI

This leak affects millions of people who trusted an app with their most private thoughts. It’s a reminder that when you talk to an AI chatbot, you’re not just having a conversation; you’re creating a permanent record that lives on someone else’s server. And that company may or may not give a damn about protecting it.

If current trends continue, we’ll see more of this. More exposed conversations. More companies claiming they take security seriously while leaving personal chats wide open. More people discovering their secrets were never really private.

You don’t have to stop using AI tools entirely. But you do need to think critically about what you’re sharing. Assume your conversations could be exposed. Assume the company behind the app is prioritizing growth over your safety. And if you’re struggling with something serious, such as suicidal thoughts or a mental health crisis, talk to a real human, not a chatbot built by a company that can’t even secure a database properly.

To learn more, read the original 404 Media article: “Massive AI Chat App Leaked Millions of Users Private Conversations”
